From Pressing Buttons to Intent-Driven Flow

How I went from managing AI agent context windows by hand to building an orchestration system that lets me describe what I want and walk away.

Sekou M. Doumbouya

The views expressed here are my own and do not represent those of any current or former employer.

I spent an entire weekend building a 44,000-line TypeScript monorepo with eight AI agents. That’s not the interesting part. The interesting part is what I was doing the whole time: pressing buttons.

Not metaphorical buttons. Literal approve-and-continue buttons in my terminal. Every time an agent finished a task, I had to review the output, decide what to dispatch next, paste context from the last agent into the next agent’s prompt, and hit enter. For twelve hours straight. I was the bottleneck in my own autonomous system.

The agents were fast. The agents were good. But I was the message bus, and I was slow.

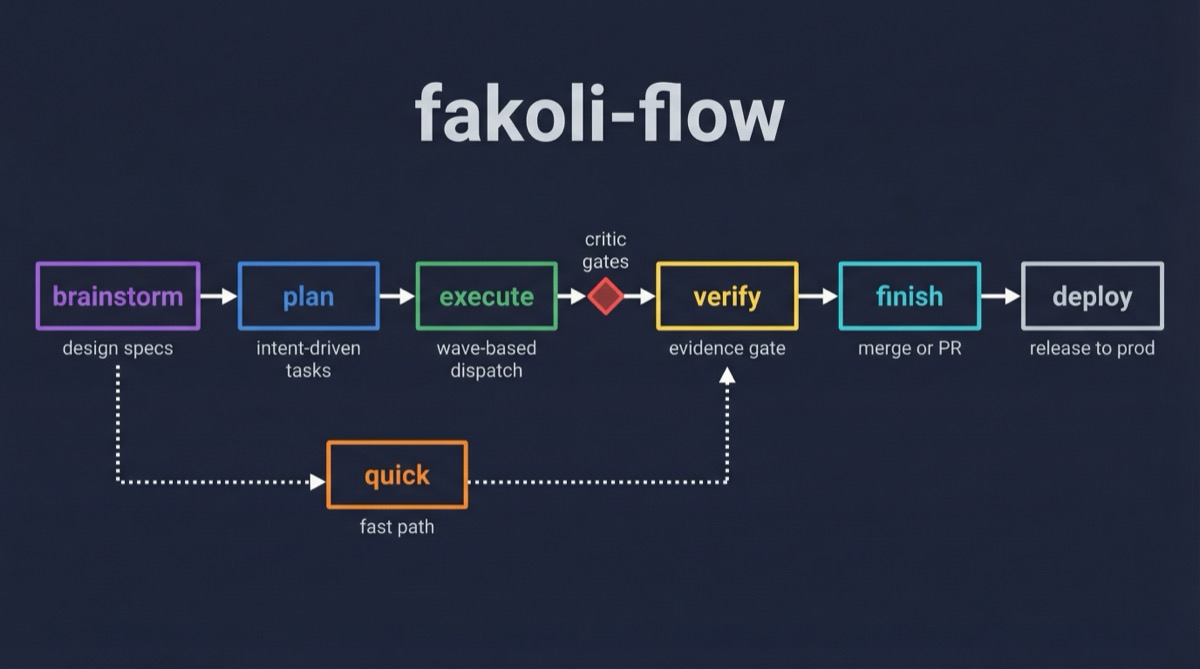

That weekend is what made me build fakoli-flow.

The Context Window Tax

Here’s the friction nobody talks about when you’re working with AI coding agents: context management is your job. The agent doesn’t know what the last agent did unless you tell it. The agent doesn’t know which files changed unless you paste the list. The agent doesn’t know whether the critic found problems unless you copy the findings into the next prompt.

I was doing this manually. Every wave of agents meant I was reading status files, extracting decisions, formatting them into prompts, and dispatching the next wave. I was a human orchestrator performing a job that should have been automated — and losing context quality every time I paraphrased something instead of passing it through verbatim.

The worst part: I was managing my main conversation window like a scarce resource. Every long prompt I pasted consumed context. Every agent output I reviewed consumed context. By hour eight, I was making trade-offs about what to include and what to cut, and those trade-offs were degrading the quality of every subsequent dispatch.

Let me be blunt about something. If your workflow with AI agents involves you manually ferrying context between them, you don’t have an agent system. You have a faster typewriter.

What SuperPowers Gets Right (And Where It Breaks)

I’d been using the SuperPowers plugin for Claude Code — 135,000 stars, years of development, genuinely well-designed. Its brainstorming skill is excellent: one question at a time, visual companion for mockups, structured spec output. I still think it’s the best brainstorming flow available.

But the execution model didn’t match how I actually work. SuperPowers dispatches generic subagents — an “implementer” that writes code, a “spec reviewer” that checks compliance, a “code reviewer” that checks quality. Three anonymous agents per task, none of which know my codebase conventions, my testing expectations, or my language-specific style preferences.

More critically: SuperPowers writes full implementation code into its plans. Function bodies, complete test files, line-by-line instructions. A 3,000-line plan for a feature that takes 500 lines to implement. The moment the first agent diverges from the plan — and it always diverges — the plan becomes misleading documentation. In the BAARA Next project, 30-40% of plan code was modified by agents during implementation.

I wanted something different. I wanted plans that describe what to achieve, not how to implement it. I wanted specialist agents that bring their own expertise instead of following recipes. And I wanted the orchestration to happen automatically instead of through my terminal.

The Intent-Driven Principle

The core idea behind fakoli-flow crystallized during a brainstorming session about the BAARA Next execution engine. I was comparing how Temporal handles workflow durability versus how we’d handle it for non-deterministic LLM agents, and I realized the same principle applied to plans.

A Temporal workflow is deterministic. You can replay every event and reconstruct the exact state. A prescriptive plan is the same idea applied to development — replay every step and reconstruct the implementation. But LLM agents aren’t deterministic. They read the actual codebase, discover existing patterns, and make implementation decisions based on live context that the plan author didn’t have.

So why write implementation code in the plan at all?

A SuperPowers plan says:

    Task 3: Implement the retry function

    Step 1: Write failing test

    test("retries with exponential backoff", () => {
      const result = retry(failingFn, { maxRetries: 3 });
      expect(result.attempts).toBe(3);
    });

    Step 2: Implement

    export function retry<T>(fn: () => T, opts: RetryOptions) {
      // ... 30 lines of implementation
    }

A fakoli-flow plan says:

    Task 3: Retry with exponential backoff

    Intent: Failed executions must be retried with increasing delay.

    Acceptance criteria:
    - Configurable max retries (default 3) and initial delay (default 1000ms)
    - Delay doubles each attempt with ±10% jitter
    - Retries exhausted → route to DLQ

    Scope: packages/orchestrator/src/retry.ts
    Agent: welder
    Verify: bun test (retry scenarios pass)

Ten lines. The agent reads the actual codebase before implementing. If a delay utility already exists, the agent uses it. If the codebase uses a different testing framework than the plan assumed, the agent adapts. The acceptance criteria don’t change regardless of implementation approach.

Describing intent instead of implementation is not a suggestion. It’s the entire design philosophy of fakoli-flow.
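To make the shape of an intent-driven task concrete, here is a minimal sketch of how one could be represented and sanity-checked before dispatch. The `PlanTask` type and `validateTask` helper are hypothetical names introduced for illustration; the field names simply mirror the labels in the retry example, and nothing here is taken from fakoli-flow's actual code.

```typescript
// Hypothetical shape for one task in an intent-driven plan.
// Field names mirror the labels in the retry example; the type is an assumption.
interface PlanTask {
  title: string;
  intent: string;               // what must be true when the task is done
  acceptanceCriteria: string[]; // observable conditions, not implementation steps
  scope: string[];              // files or directories the agent may touch
  agent: string;                // specialist agent to dispatch, e.g. "welder"
  verify: string;               // command or check that proves completion
}

const retryTask: PlanTask = {
  title: "Retry with exponential backoff",
  intent: "Failed executions must be retried with increasing delay.",
  acceptanceCriteria: [
    "Configurable max retries (default 3) and initial delay (default 1000ms)",
    "Delay doubles each attempt with ±10% jitter",
    "Retries exhausted → route to DLQ",
  ],
  scope: ["packages/orchestrator/src/retry.ts"],
  agent: "welder",
  verify: "bun test",
};

// Returns a list of problems; an empty list means the task can be dispatched.
function validateTask(task: PlanTask): string[] {
  const problems: string[] = [];
  if (task.intent.trim() === "") problems.push("missing intent");
  if (task.acceptanceCriteria.length === 0) problems.push("no acceptance criteria");
  if (task.scope.length === 0) problems.push("no scope");
  if (task.agent.trim() === "") problems.push("no agent assigned");
  if (task.verify.trim() === "") problems.push("no verify step");
  return problems;
}
```

Note what the type leaves out: there is no field for function bodies or test code. Everything the agent needs to start is an outcome, a boundary, and a way to check the result.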

The Critic Gate That Changed Everything

During BAARA Next Phase 1, I dispatched three welder agents in parallel to build different packages of the monorepo. All three finished. All three typechecked. I was about to move on to Phase 2.

Then I ran the critic.

Ten MUST FIX bugs. State machine violations that would crash at runtime. An unauthenticated remote code execution vector in the shell runtime. API contracts that lied about what they returned. A heartbeat method that crashed on every single invocation because it tried to transition a running execution to running — a self-transition the state machine correctly rejected.

Every one of these passed typecheck. Every one would have been discovered in production, or more likely, would have compounded with the next phase’s code into debugging nightmares.

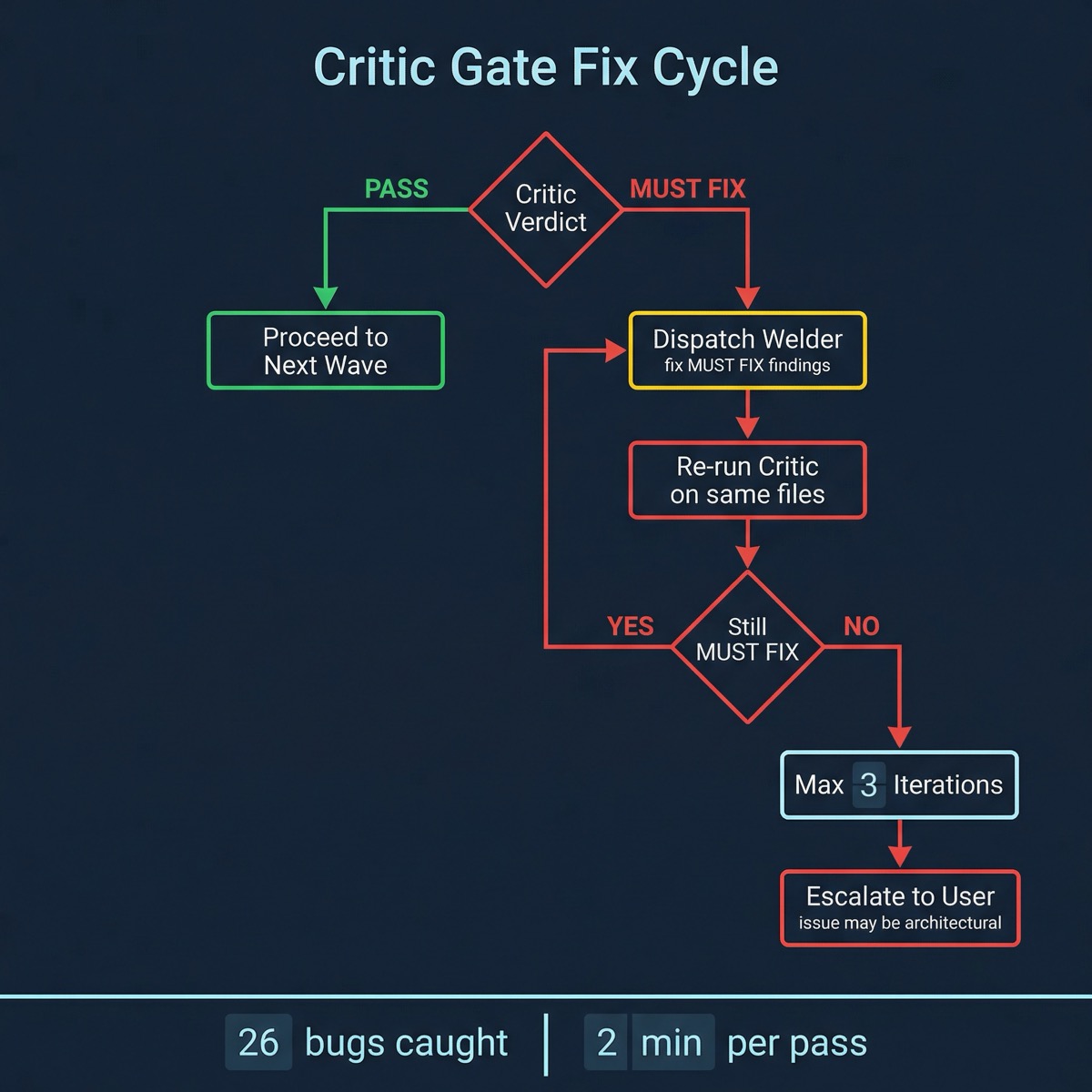

After that, I made the critic gate non-negotiable. After every wave that writes code, critic reviews everything. If it finds MUST FIX issues, welder fixes them and critic re-reviews. The wave doesn’t advance until critic passes. No exceptions.

The fix cycle is the key part. It’s not “critic finds bugs, you fix them.” It’s “critic finds bugs, welder fixes them automatically, critic re-reviews, and this loops until it’s clean or escalates to you after three attempts.”
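The loop is small enough to sketch. This is an illustration of the control flow only, under stated assumptions: `Finding`, `Critic`, `Welder`, and `runCriticGate` are hypothetical names, the real critic and welder are agents rather than functions, and the callbacks are synchronous here just to keep the example short.

```typescript
// Hypothetical types standing in for agent calls.
type Finding = { severity: "MUST_FIX" | "SHOULD_FIX"; description: string };
type Critic = () => Finding[];              // reviews the current state of the wave
type Welder = (mustFix: Finding[]) => void; // attempts to fix the reported issues

type GateResult =
  | { status: "passed"; attempts: number }
  | { status: "escalated"; attempts: number; remaining: Finding[] };

// Review, fix, re-review. Stops when no MUST FIX findings remain,
// or hands the remaining findings to a human after maxFixAttempts fix rounds.
function runCriticGate(critic: Critic, welder: Welder, maxFixAttempts = 3): GateResult {
  let attempts = 0;
  for (;;) {
    const mustFix = critic().filter((f) => f.severity === "MUST_FIX");
    if (mustFix.length === 0) {
      return { status: "passed", attempts };
    }
    if (attempts >= maxFixAttempts) {
      return { status: "escalated", attempts, remaining: mustFix };
    }
    welder(mustFix);
    attempts += 1;
  }
}
```

Two details matter in this shape. The critic always gets the last word, so a fix is never assumed to have worked. And only MUST FIX findings block the wave; anything less severe passes through the gate.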

Across six phases of BAARA Next — 44,268 lines, 218 files — the critic gate caught 26 bugs. Each critic pass took about two minutes. Each bug caught saved hours of debugging. The math isn’t subtle.

From Eight Agents to a Three-Plugin Ecosystem

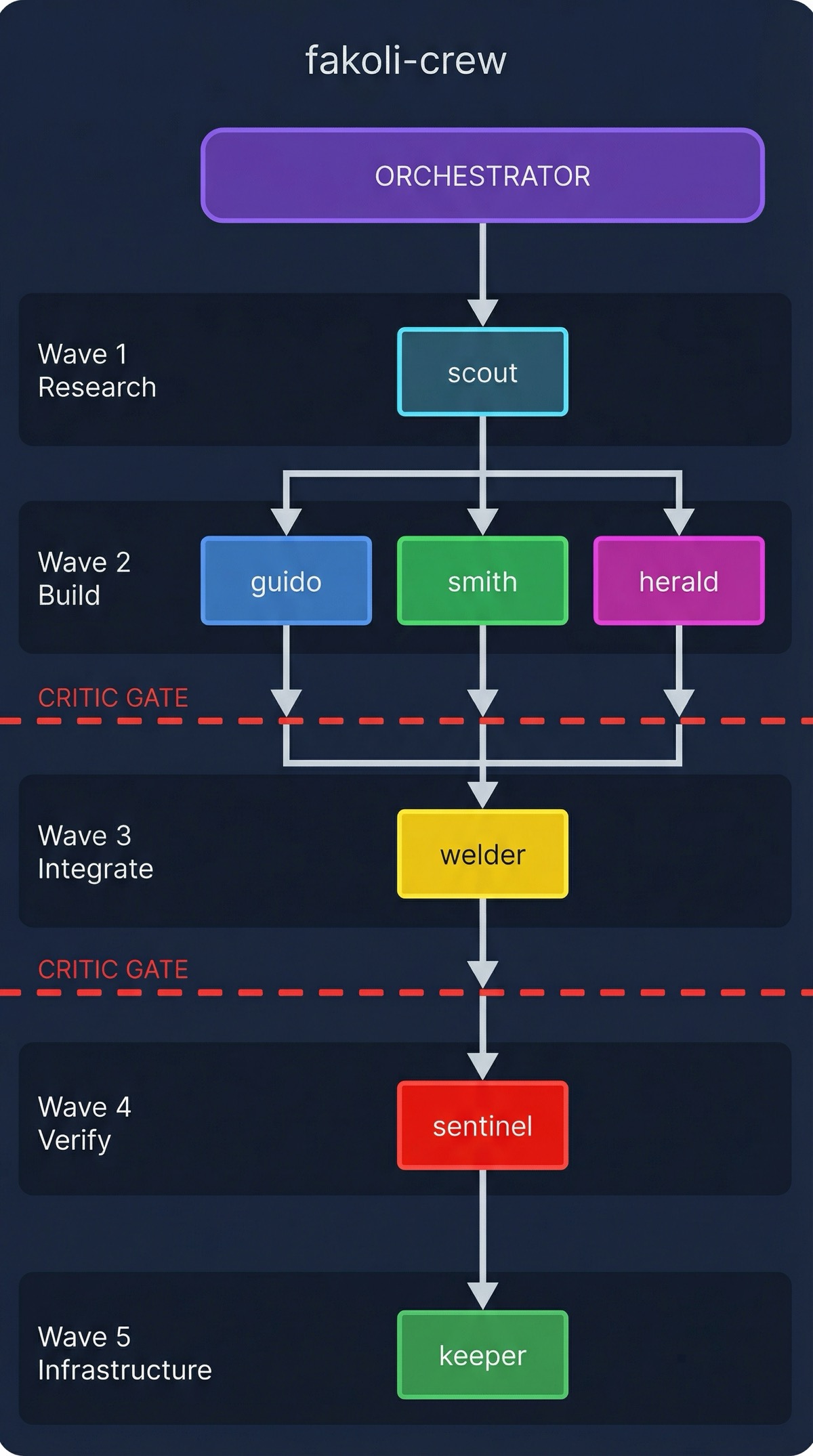

The original fakoli-crew was built for Claude Code plugin development. Eight agents, all thinking in Python, all oriented around manifest schemas and hook configurations. It worked well for that.

But BAARA Next wasn’t a Claude Code plugin. It was a full-stack TypeScript monorepo with 10 packages, a React frontend, an MCP server, and a CLI. The agents needed to work across languages, understand monorepo conventions, and apply testing discipline that wasn’t in their original prompts.

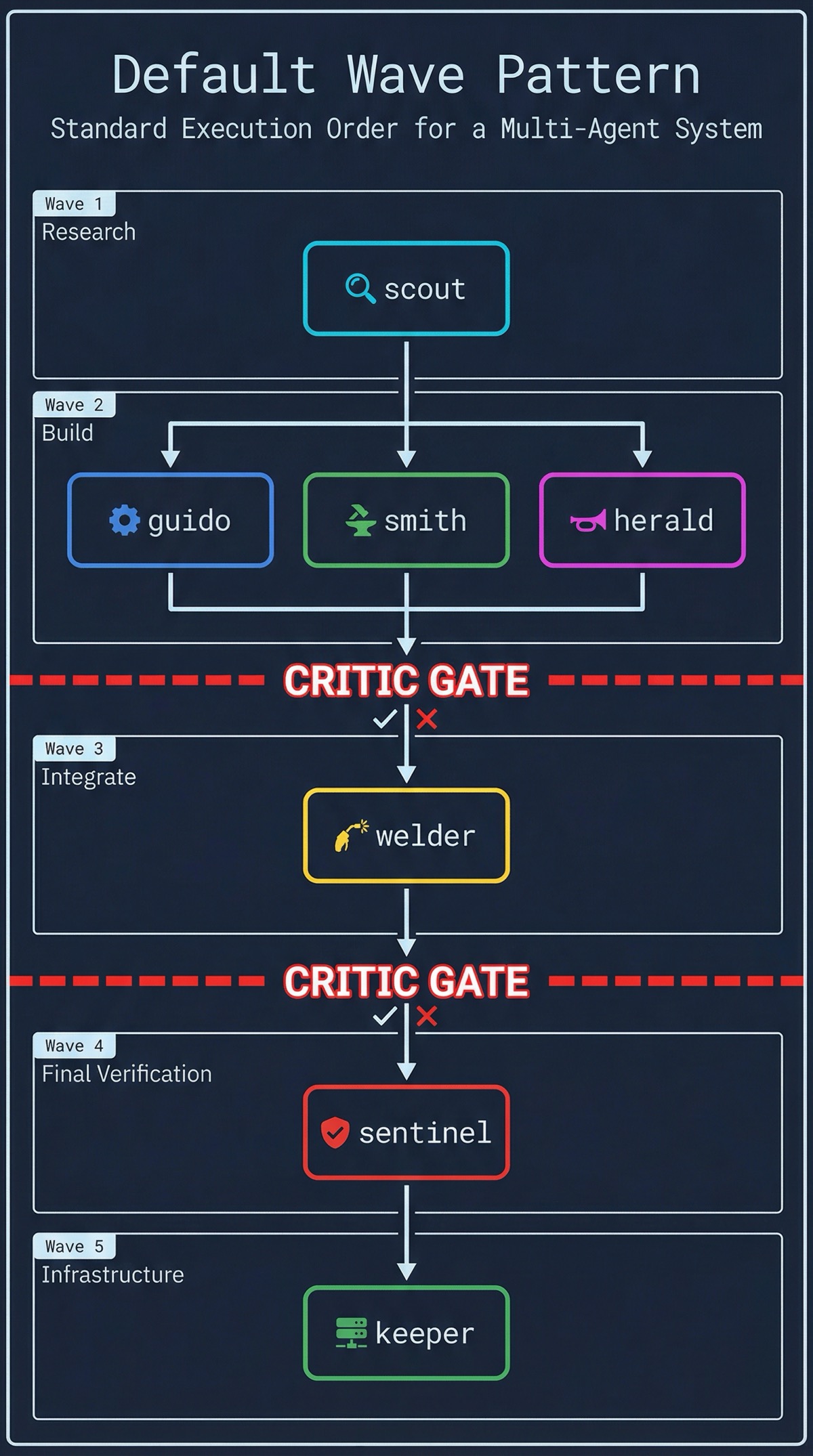

So I made them polyglot. Guido auto-detects the project language: Cargo.toml means Rust conventions from the Rust API Guidelines, pyproject.toml means Python conventions from PEP 8, and tsconfig.json means TypeScript conventions from the TypeScript Design Goals. Welder reads a patterns reference file that shows integration techniques side-by-side across all three languages. Critic applies the Staff Engineer review bar regardless of language.
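Marker-file detection of this kind can be sketched in a few lines. The `detectLanguages` function below is hypothetical, not Guido's actual logic: it assumes the caller passes the file names found at the project root, and it returns every match because a monorepo may contain more than one language.

```typescript
type Language = "rust" | "python" | "typescript";

// Marker files named in the text. The check order is an assumption.
const MARKERS: ReadonlyArray<[string, Language]> = [
  ["Cargo.toml", "rust"],
  ["pyproject.toml", "python"],
  ["tsconfig.json", "typescript"],
];

// rootFiles: file names present at the project root (no directory scanning here).
function detectLanguages(rootFiles: string[]): Language[] {
  const present = new Set(rootFiles);
  return MARKERS.filter(([marker]) => present.has(marker)).map(([, lang]) => lang);
}
```

An empty result is a useful signal in its own right: the agent has no convention set to apply and should ask rather than guess.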

Then I built fakoli-flow as the orchestration layer — the thing that actually dispatches agents in waves, manages status files between them, and enforces the critic gate automatically. The agents provide the expertise. The flow provides the coordination.
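One way to picture wave dispatch is as layering a dependency graph: everything with no unmet dependencies runs in parallel, then the next layer, and so on. The sketch below assumes a hypothetical `FlowTask` shape with explicit `dependsOn` ids; how fakoli-flow actually derives waves is not shown in this article.

```typescript
// Hypothetical task shape: an id plus the ids of tasks it depends on.
interface FlowTask {
  id: string;
  dependsOn: string[];
}

// Groups tasks into waves. Every task in a wave has all of its
// dependencies satisfied by earlier waves, so a wave can run in parallel.
function planWaves(tasks: FlowTask[]): string[][] {
  const done = new Set<string>();
  let remaining = [...tasks];
  const waves: string[][] = [];

  while (remaining.length > 0) {
    const ready = remaining.filter((t) => t.dependsOn.every((d) => done.has(d)));
    if (ready.length === 0) {
      // Nothing can start: either a cycle or a dependency that is not in the plan.
      throw new Error("dependency cycle or unknown dependency");
    }
    waves.push(ready.map((t) => t.id));
    for (const t of ready) done.add(t.id);
    remaining = remaining.filter((t) => !done.has(t.id));
  }
  return waves;
}
```

With waves computed up front, the boundary between two waves becomes the natural place to run a review before anything downstream starts.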

And then I realized my systems-thinking-plugin — nine agents for infrastructure analysis — had the exact same orchestration pattern. Discovery agents in parallel, extraction in the middle, synthesis at the end. Status files for communication. Waves for dependency ordering.

Three plugins. One orchestration pattern. Each works standalone. Together they’re the full stack.

| Plugin | Role | What It Does |
|---|---|---|
| fakoli-crew | Specialist agents | 8 polyglot agents: architect, reviewer, researcher, integrator, documenter, validator |
| fakoli-flow | Workflow orchestration | Intent-driven pipeline: brainstorm → plan → execute → verify → finish |
| systems-thinking | Architecture analysis | 9 agents for infrastructure decisions: discovery → extraction → synthesis |

What I’m Still Figuring Out

I want to be honest about the limitations.

The visual companion — where fakoli-flow fires up a local web server to show mockups during brainstorming — works well when it works. But the server state tracking is fragile. SuperPowers has the same problem, and my fix (PID files, liveness checks, auto-restart) is better but not bulletproof. I’ve had sessions where the companion dies silently and I’m writing HTML files that nobody sees.
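A PID-file liveness check reduces to a small decision function. The sketch below is an assumption about the general technique, not the plugin's code: `checkCompanion` and `CompanionState` are hypothetical names, and the process probe is injected so the decision logic stays independent of the runtime.

```typescript
type CompanionState = "running" | "needs-restart";

// pidFileContent: raw text of the PID file, or null if the file is missing.
// isAlive: probe for a process id. In Node.js this is commonly
// `process.kill(pid, 0)` inside try/catch: signal 0 sends nothing and
// throws when the process does not exist.
function checkCompanion(
  pidFileContent: string | null,
  isAlive: (pid: number) => boolean
): CompanionState {
  if (pidFileContent === null) return "needs-restart";

  const text = pidFileContent.trim();
  if (!/^\d+$/.test(text)) return "needs-restart"; // corrupt or empty PID file

  const pid = parseInt(text, 10);
  if (pid <= 0) return "needs-restart";

  return isAlive(pid) ? "running" : "needs-restart";
}
```

This also shows why the approach is not bulletproof. A live PID only proves that some process with that id exists; it says nothing about whether the server is still answering requests, and a recycled PID can make a dead companion look alive.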

The intent-driven plan format requires trusting the agents. If the agent doesn’t have TDD baked into its prompt, it won’t write tests first just because the plan says “Verify: tests pass.” I solved this by absorbing TDD enforcement directly into welder and guido’s agent definitions, but that means the quality guarantee lives in the agent, not the plan. Swap in a generic subagent and the discipline evaporates.

And the biggest open question: does this scale beyond one person? I’m the orchestrator, the user, and the quality reviewer. When I dispatch a crew and walk away, it’s my judgment at the design phase and the critic’s judgment at the review phase. I’m not sure yet whether this works for a team of humans dispatching shared agent crews, or whether the status file coordination protocol needs something more structured than markdown files in a docs/plans/ directory.

The Transferable Principle

The friction I was trying to eliminate wasn’t about speed. The agents were always fast. The friction was about me — being the bottleneck, managing context windows, ferrying decisions between agents, pressing buttons every time something finished.

The fix wasn’t making the agents faster or smarter. It was making the orchestration automatic and the plans shorter. Describe what you want. Describe how you’ll know it’s done. Let the specialists figure out the rest. Verify after every wave. Ship when the critic says it’s clean.

That’s intent-driven flow. It’s not a framework. It’s a way of thinking about what you actually need to say to get work done — and what you’ve been saying that was never necessary.

Co-authored with AI, based on the author's working sessions and notes.

Explore the source

fakoli/fakoli-plugins
